Case Study: Evaluating Precision and Spatial Perception in VR-Based Surgical Training

Project Type

University Project

Role

UX Researcher (Human Factors)

3D Modeling & VR Scene Development

Published Paper

Hammering Away - Influence of Hammer Weights on Positional Hammering Accuracy in Virtual Reality

Results

Participants completed the hammering tasks in VR and in the real environment. The comparison revealed perceptual differences that point to areas for improvement in VR-based surgical training.

Goal

Evaluate how accurately people perform high-force hammering tasks in virtual reality compared to real life, focusing on precision and spatial perception.

Tools

- Blender

- Substance 3D

- VS Code

- Python

My Contribution

Manual analysis would have taken 40+ hours, so I built a semi-automated analysis tool that processes each target sheet in about 30 seconds, saving over 90% of the time.

Problem Space

Surgical accuracy, especially during high-force tasks like hip implant hammering, is critical. Traditional VR training systems lack validation for realistic force feedback and spatial precision, particularly in high-impact scenarios.

This project explored: Can users perform accurate, real-world hammering actions based only on VR visual feedback?

Research Approach

- Measure and compare hammering precision in VR vs. real conditions.

- Evaluate how hammer weight and target position impact spatial accuracy.

- Identify perceptual distortions and their implications for VR-based surgical training.

Experimental Setup

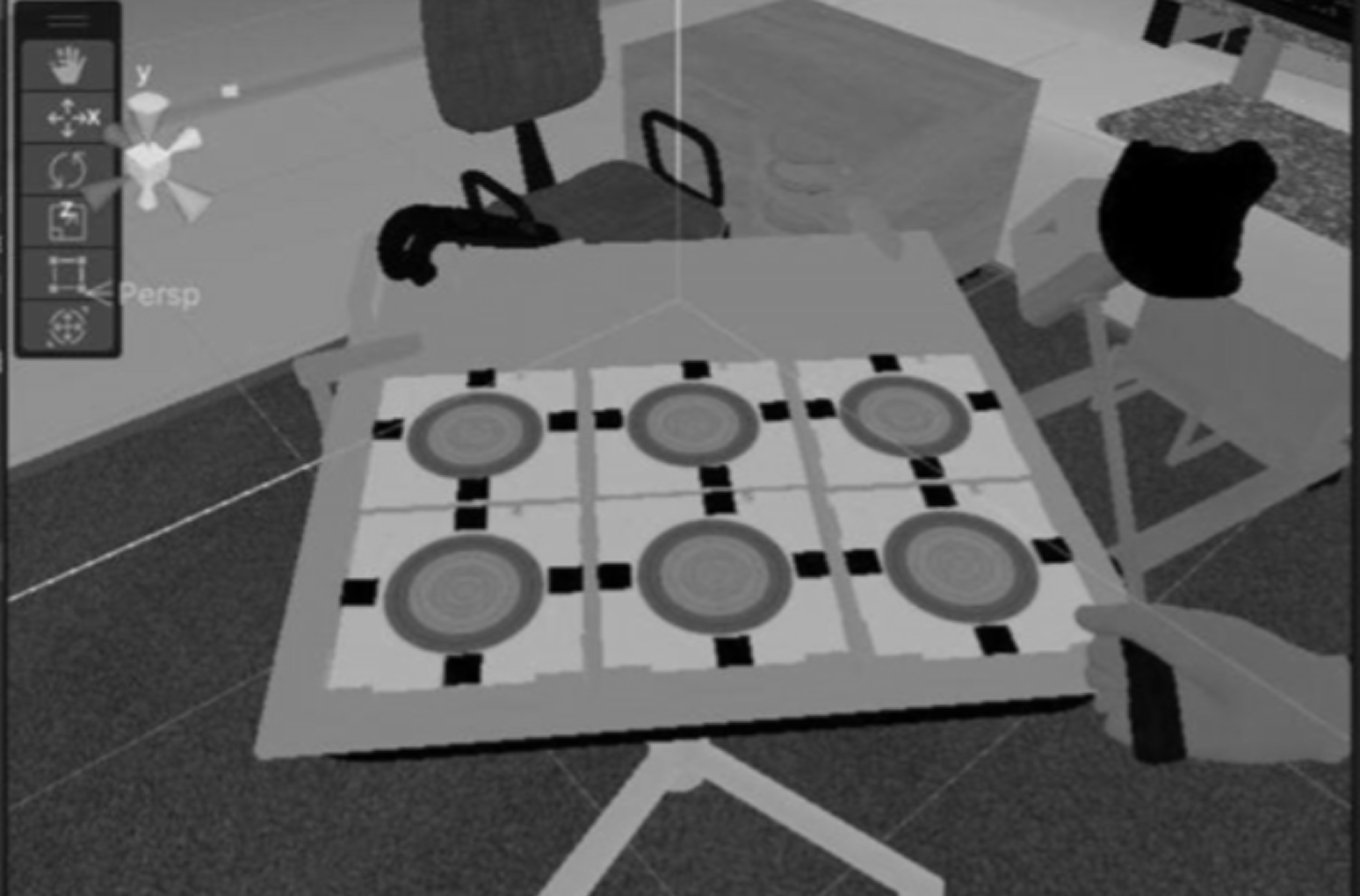

- Built a physical and VR environment with 6 target zones.

- Designed custom hammers (300g, 600g, 900g), each fitted with a needle tip and VR tracker.

- Simulated hammering on targets filled with kinetic sand for safety.

Studies Conducted

- Hammer weight study: Tested precision using hammers of three different weights.

- Target position study: Tested accuracy across six spatially varied target zones.

VR vs. Real Environment

Each participant completed tasks in both VR and real environments, allowing within-subject comparison.

Data Collection & Analysis

- Real-time positional data captured using Unity + HTC Vive + Vive Tracker.

- Developed a custom Python + OpenCV tool to semi-automatically evaluate millimeter-level accuracy on paper target sheets.

- Applied MANOVA and descriptive statistics to analyze spatial results.
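The accuracy measurement and descriptive statistics listed above can be illustrated with a minimal sketch. It is not the study's actual tool: it assumes the strike positions have already been located on a scanned sheet (for example with OpenCV), and the names `strike_error_mm`, `summarize`, the scan resolution `PX_PER_MM`, and all coordinates are hypothetical values chosen for illustration.

```python
import math
from statistics import mean, stdev


def strike_error_mm(strike_px, target_px, px_per_mm):
    """Return the (dx, dy, radial) offset of a strike from the target centre, in mm."""
    dx = (strike_px[0] - target_px[0]) / px_per_mm
    dy = (strike_px[1] - target_px[1]) / px_per_mm
    return dx, dy, math.hypot(dx, dy)


def summarize(errors_mm):
    """Descriptive statistics for a list of radial errors in mm."""
    return {
        "n": len(errors_mm),
        "mean": mean(errors_mm),
        # Sample standard deviation needs at least two values.
        "sd": stdev(errors_mm) if len(errors_mm) > 1 else 0.0,
        "max": max(errors_mm),
    }


# Hypothetical data: pixel coordinates of strikes on one scanned sheet.
PX_PER_MM = 10.0            # assumed scan resolution, not from the study
target = (500, 500)         # assumed target centre in pixels
strikes = {
    "VR": [(530, 540), (470, 500)],
    "real": [(506, 508), (500, 497)],
}

for condition, points in strikes.items():
    radial = [strike_error_mm(p, target, PX_PER_MM)[2] for p in points]
    print(condition, summarize(radial))
```

Keeping the signed `dx` and `dy` offsets alongside the radial error preserves the direction of each miss, which is the kind of multivariate outcome a MANOVA can then compare across conditions.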

Improving Evaluation Efficiency

As part of the study, we needed to analyze thousands of hammer strikes on paper target sheets to measure spatial precision. Originally, this would have had to be done manually.

Each sheet was estimated to take about 10 minutes to process by hand. With 33 participants and multiple sheets per participant, manual evaluation would have taken over 40 hours.

My UX Research Contribution

To save time and reduce error, I proposed and built a semi-automated evaluation tool using Python and OpenCV, designed with usability, speed, and control in mind. Processing time per sheet dropped from roughly 10 minutes to about 30 seconds, a time saving of over 90%, which let the team complete the analysis in a fraction of the original time.

New Time Per Sheet: ~30 Seconds

Time Saved: Over 90%
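The time figures can be cross-checked with a few lines of arithmetic. The sketch below uses only the stated numbers (10 minutes per sheet manually, 30 seconds with the tool, over 40 hours in total). The sheet count it derives is an implied lower bound, not a number reported in the study, and `time_saved_fraction` is a hypothetical helper name.

```python
MANUAL_MIN_PER_SHEET = 10   # stated manual estimate per sheet
TOOL_SEC_PER_SHEET = 30     # stated time per sheet with the tool
MANUAL_TOTAL_HOURS = 40     # stated lower bound ("over 40 hours")


def time_saved_fraction(manual_sec, tool_sec):
    """Fraction of processing time saved when moving from manual to tool."""
    return 1 - tool_sec / manual_sec


saving = time_saved_fraction(MANUAL_MIN_PER_SHEET * 60, TOOL_SEC_PER_SHEET)

# Assumption: the 40-hour total is simply sheets x 10 minutes, so this is
# the minimum number of sheets consistent with the stated figures.
implied_sheets = MANUAL_TOTAL_HOURS * 60 // MANUAL_MIN_PER_SHEET
tool_total_hours = implied_sheets * TOOL_SEC_PER_SHEET / 3600

print(f"Saving per sheet: {saving:.0%}")
print(f"Implied minimum sheet count: {implied_sheets}")
print(f"Total time with the tool: {tool_total_hours:.1f} h")
```

Under these assumptions the per-sheet saving works out to 95%, consistent with the "over 90%" claim, and the full analysis shrinks from over 40 hours to roughly 2 hours.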